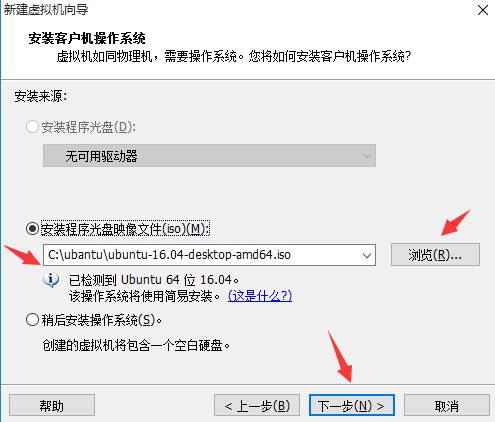

Tools used: VMware, hadoop-2.7.2.tar, jdk-8u65-linux-x64.tar, ubuntu-16.04-desktop-amd64.iso

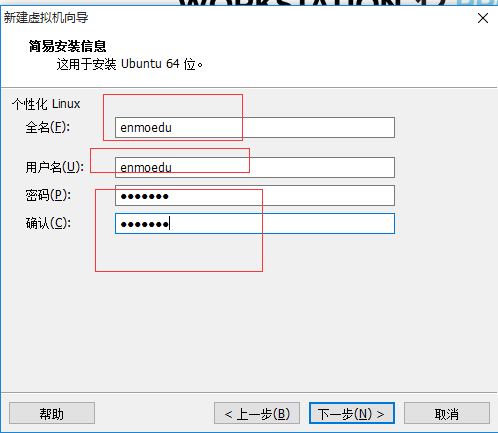

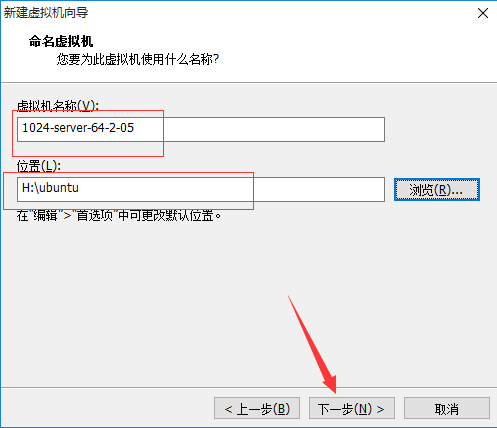

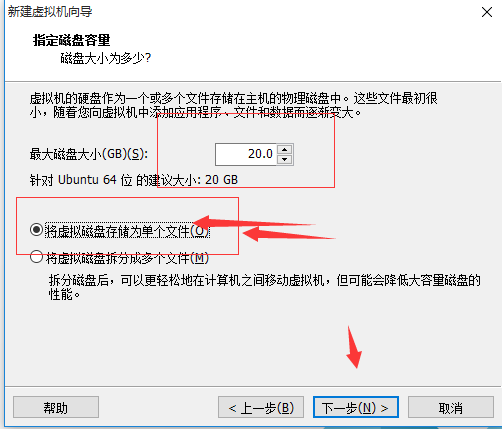

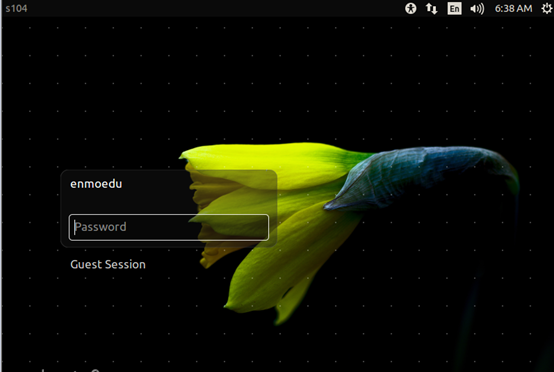

1. Install ubuntu-16.04-desktop-amd64.iso on VMware

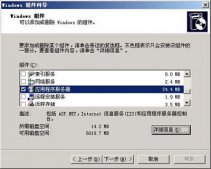

Click "Create a New Virtual Machine" → select "Typical (recommended)" → click "Next" → click "Finish"

Edit /etc/hostname (for example, s100 on the master node)

vim /etc/hostname

Save and exit

Edit /etc/hosts

127.0.0.1 localhost

192.168.1.100 s100

192.168.1.101 s101

192.168.1.102 s102

192.168.1.103 s103

192.168.1.104 s104

192.168.1.105 s105
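Since the entries follow a regular pattern, they can also be generated instead of typed by hand. The following is a minimal sketch, assuming the 192.168.1 prefix and the s<N> naming from the listing above; the function name gen_hosts is hypothetical.

```shell
#!/bin/bash
# Print /etc/hosts entries for nodes s<first>..s<last> on a given /24 prefix.
# Assumption: each host name is "s" followed by the last octet of its address.
gen_hosts() {
  local prefix="$1" first="$2" last="$3" n
  echo "127.0.0.1 localhost"
  n="$first"
  while [ "$n" -le "$last" ]; do
    echo "$prefix.$n s$n"
    n=$((n + 1))
  done
}

# Example: gen_hosts 192.168.1 100 105
```

Review the output before writing it to /etc/hosts, since the generated list replaces the whole file.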

Configure the NAT network

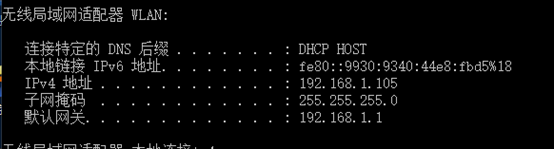

Check the IP address and gateway on the Windows 10 host

Configure /etc/network/interfaces

#interfaces(5) file used by ifup(8) and ifdown(8)

#The loopback network interface

auto lo

iface lo inet loopback

#iface eth0 inet static

iface eth0 inet static

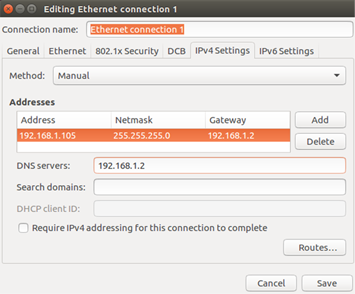

address 192.168.1.105

netmask 255.255.255.0

gateway 192.168.1.2

dns-nameservers 192.168.1.2

auto eth0

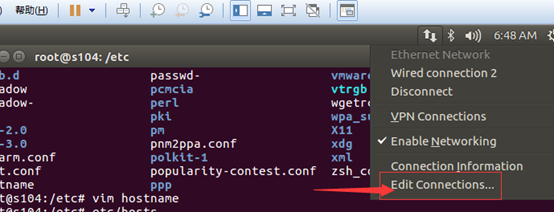

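You can also configure this through the graphical interface.

After configuring, run ping www.baidu.com to check whether the network is working.

Once the network is up, the guest and the host can ping each other by name after one more change.

Edit the hosts file on the host machine: c:\windows\system32\drivers\etc\hosts

File contents: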

127.0.0.1 localhost

192.168.1.100 s100

192.168.1.101 s101

192.168.1.102 s102

192.168.1.103 s103

192.168.1.104 s104

192.168.1.105 s105

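Set up the 163 mirror as the apt source (the entries below are for Ubuntu 14.04, codename trusty; on the 16.04 image used here the codename is xenial, so substitute xenial for trusty)

$>cd /etc/apt/

$>gedit sources.list

Be sure to back up sources.list before editing it.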
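The backup step can be sketched as a small helper. This is only an illustration: the function name backup_file and the timestamped .bak suffix are assumptions, not something the original article prescribes.

```shell
#!/bin/bash
# Copy a file to <name>.bak.<timestamp> next to the original and print the
# path of the copy. -p preserves mode and timestamps.
backup_file() {
  local src="$1"
  local dest
  dest="${src}.bak.$(date +%Y%m%d%H%M%S)"
  cp -p -- "$src" "$dest" && printf '%s\n' "$dest"
}

# Example (as root): backup_file /etc/apt/sources.list
```

If the new source list turns out to be wrong, copying the printed backup path over sources.list restores the previous state.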

deb http://mirrors.163.com/ubuntu/ trusty main restricted universe multiverse

deb http://mirrors.163.com/ubuntu/ trusty-security main restricted universe multiverse

deb http://mirrors.163.com/ubuntu/ trusty-updates main restricted universe multiverse

deb http://mirrors.163.com/ubuntu/ trusty-proposed main restricted universe multiverse

deb http://mirrors.163.com/ubuntu/ trusty-backports main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty-security main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty-updates main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty-proposed main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty-backports main restricted universe multiverse

Update the package index

$>apt-get update

Create a soft folder under the root directory: mkdir /soft

After it is created the folder belongs to root, so change its ownership: chown enmoedu:enmoedu /soft/

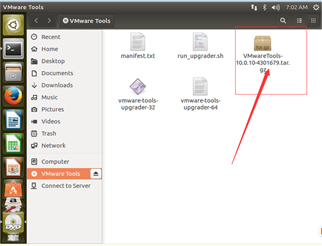

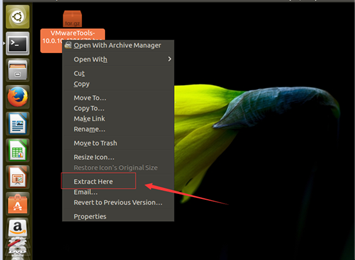

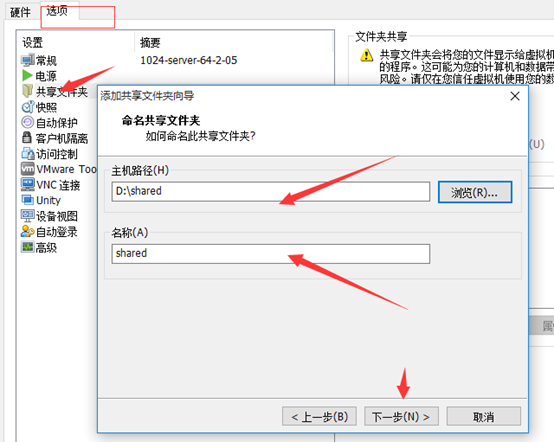

Install shared folders (VMware Tools)

Place the VMware Tools archive on the desktop, right-click it, and choose "Extract here"

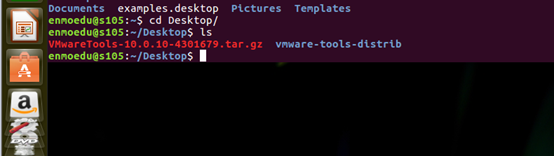

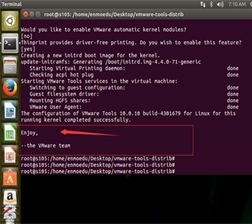

Switch to the enmoedu user's home directory, then cd ~/Desktop/vmware-tools-distrib

Run ./vmware-install.pl

Press Enter at each prompt to continue

Installation complete

Copy the Hadoop and JDK archives to the Downloads directory under enmoedu's home

$>sudo cp hadoop-2.7.2.tar.gz jdk-8u65-linux-x64.tar.gz ~/Downloads/

Extract both archives into the current directory

$>tar -zxvf hadoop-2.7.2.tar.gz

$>tar -zxvf jdk-8u65-linux-x64.tar.gz

Copy the extracted directories to /soft

$>cp -r hadoop-2.7.2 /soft

$>cp -r jdk1.8.0_65/ /soft

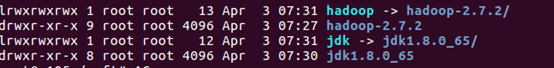

Create symbolic links (run these in /soft)

$>ln -s hadoop-2.7.2/ hadoop

$>ln -s jdk1.8.0_65/ jdk

$>ls -ll

Configure environment variables

$>vim /etc/environment

JAVA_HOME=/soft/jdk

HADOOP_HOME=/soft/hadoop

PATH="/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:/soft/jdk/bin:/soft/hadoop/bin:/soft/hadoop/sbin"

Apply the environment variables

$>source /etc/environment

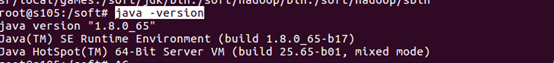

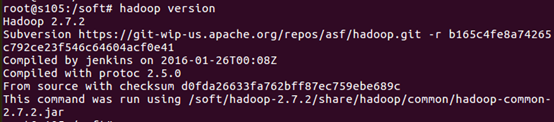

Verify the installation

$>java -version

$>hadoop version

Edit the configuration files under /soft/hadoop/etc/hadoop/

[core-site.xml]

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://s100/</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/enmoedu/hadoop</value>

</property>

</configuration>

[hdfs-site.xml]

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>s104:50090</value>

<description>

The secondary namenode http server address and port.

</description>

</property>

</configuration>

[mapred-site.xml]

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

[yarn-site.xml]

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>s100</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>
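After editing the four files, it can help to read a value back and confirm it is what you intended. The helper below is a rough sketch that only works for the one-tag-per-line layout used above (it is not a general XML parser); the function name get_prop is hypothetical.

```shell
#!/bin/bash
# Print the <value> that follows a given <name> in a Hadoop *-site.xml file.
# Assumption: <name> and <value> each sit on their own line, as listed above.
get_prop() {
  # $1 = config file, $2 = property name
  awk -v want="$2" '
    /<name>/  { gsub(/.*<name>|<\/name>.*/, ""); name = $0 }
    /<value>/ { gsub(/.*<value>|<\/value>.*/, ""); if (name == want) { print; exit } }
  ' "$1"
}

# Example: get_prop /soft/hadoop/etc/hadoop/core-site.xml fs.defaultFS
```

Once Hadoop is on the PATH, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop itself resolves, which is the more reliable check.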

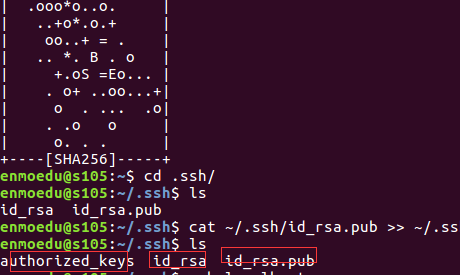

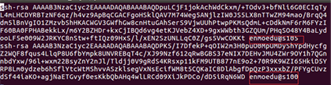

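Configure passwordless SSH login

Install ssh

$>sudo apt-get install ssh

Generate a key pair

Run the following in enmoedu's home directory:

$>ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

Append the public key to the authorized keys file

$>cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

Once the localhost test succeeds, copy the master node's public key into the authorized keys file on the other nodes.

Repeat the same steps for the root user.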

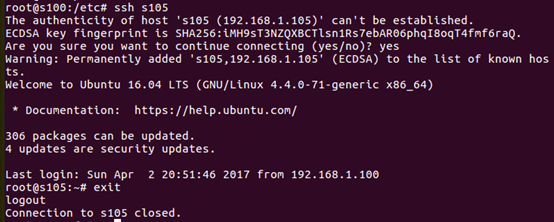

$>ssh localhost

Test from the master node to confirm that it works.
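One possible way to copy the master's public key to the other nodes is ssh-copy-id. The sketch below only prints the commands so they can be reviewed first; the function name push_key is hypothetical, and it assumes the key pair generated above and that the same user exists on every node.

```shell
#!/bin/bash
# Print one ssh-copy-id command per host for the given user.
# Nothing is executed here; pipe the output to sh to actually run it.
push_key() {
  local user="$1" host
  shift
  for host in "$@"; do
    echo "ssh-copy-id -i ~/.ssh/id_rsa.pub $user@$host"
  done
}

# Example: push_key enmoedu s101 s102 s103 s104 s105 | sh
```

Each ssh-copy-id call asks for the remote password once; after that, ssh to that host from the master should no longer prompt.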

Edit the slaves file

[/soft/hadoop/etc/hadoop/slaves]

s101

s102

s103

s105

For the remaining machines, clone this VM and then change only the hostname and the network configuration.
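Because only the last octet of the address differs between clones, the per-node network file can be rendered from a template. This is a sketch under the assumptions of this article (interface eth0, gateway and DNS at 192.168.1.2); on Ubuntu 16.04 the interface may carry a different name, which `ip link` will show. The function name render_interfaces is hypothetical.

```shell
#!/bin/bash
# Print an /etc/network/interfaces file for node s<N>, where N is the last
# octet of the node's static address (for example 101 for s101).
render_interfaces() {
  local n="$1"
  cat <<EOF
auto lo
iface lo inet loopback

auto eth0
iface eth0 inet static
address 192.168.1.$n
netmask 255.255.255.0
gateway 192.168.1.2
dns-nameservers 192.168.1.2
EOF
}

# Example: render_interfaces 101
```

On the clone for s101, the matching /etc/hostname content would be the single line s101.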

Once the setup is complete

Format the HDFS file system

$>hdfs namenode -format

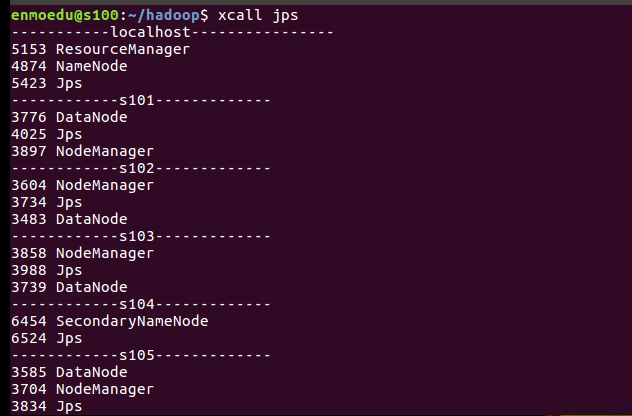

Start all daemons

$>start-all.sh

Final result:

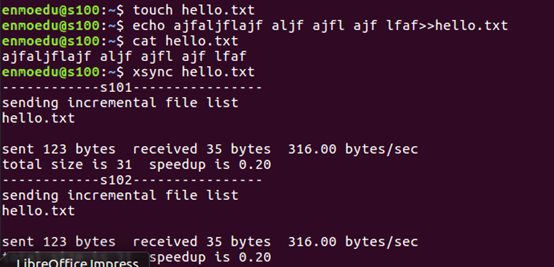
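The result can also be checked from the command line with jps, which lists the running Java processes on a node. The helper below is a sketch that reads jps output and reports which expected daemons are absent; the function name missing_daemons is hypothetical. The expected names follow from the configuration above: NameNode and ResourceManager on s100, SecondaryNameNode on s104, and DataNode plus NodeManager on the nodes listed in slaves.

```shell
#!/bin/bash
# Read `jps` output ("<pid> <name>" per line) on stdin and print every
# expected daemon name (given as arguments) that is not running.
missing_daemons() {
  local running name
  running="$(awk '{ print $2 }')"
  for name in "$@"; do
    # -x matches whole lines, so NameNode does not match SecondaryNameNode.
    if ! printf '%s\n' "$running" | grep -qxF -- "$name"; then
      echo "$name"
    fi
  done
}

# Example on s100: jps | missing_daemons NameNode ResourceManager
```

Empty output means every expected daemon was found. In Hadoop 2.x, start-all.sh is marked deprecated in favor of running start-dfs.sh and then start-yarn.sh, which start the same daemons.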

Custom script xsync (distributes files across the cluster)

[/usr/local/bin]

Copies a file to the same directory on every node, one node at a time.

[/usr/local/bin/xsync]

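#!/bin/bash

#xsync: copy a file or directory to the same path on every node.

pcount=$#

if (( pcount < 1 )); then

echo "no args"

exit 1

fi

p1=$1

#File name and absolute parent directory (symlinks resolved) of the argument.

fname=$(basename "$p1")

pdir=$(cd -P "$(dirname "$p1")" && pwd)

cuser=$(whoami)

#Push to s101..s105 as the current user.

for (( host=101; host<106; host=host+1 )); do

echo "------------s$host----------------"

rsync -rvl "$pdir/$fname" "$cuser@s$host:$pdir"

done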

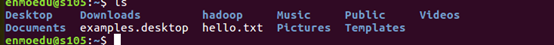

Test

$>xsync hello.txt

Custom script xcall (runs the same command on every host)

[/usr/local/bin/xcall]

#!/bin/bash

#xcall: run the same command locally and then on s101..s105 over ssh.

pcount=$#

if (( pcount < 1 )); then

echo "no args"

exit 1

fi

echo "-----------localhost----------------"

"$@"

for (( host=101; host<106; host=host+1 )); do

echo "------------s$host-------------"

ssh "s$host" "$@"

done

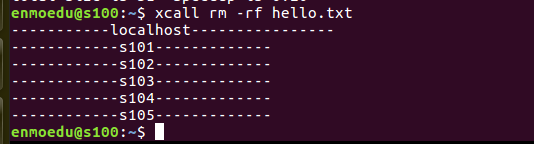

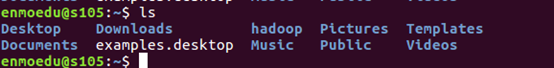

Test: xcall rm -rf hello.txt

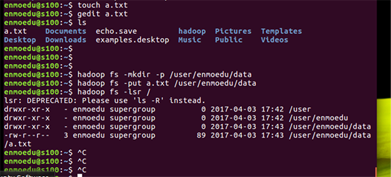

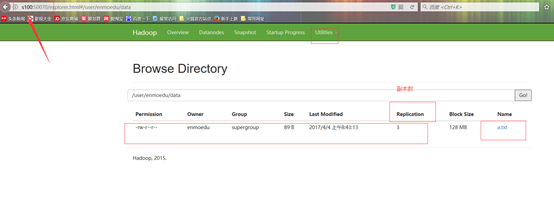

Once the cluster is up, run the following commands to test it

touch a.txt

gedit a.txt

hadoop fs -mkdir -p /user/enmoedu/data

hadoop fs -put a.txt /user/enmoedu/data

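hadoop fs -ls -R /

You can also inspect the result in a browser; with Hadoop 2.x defaults, the NameNode web UI is at http://s100:50070.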


Original link: http://www.cnblogs.com/cpyj/p/6664113.html?utm_source=tuicool&utm_medium=referral